[컴선설] Lec 04-2 Least Square solution

Three approaches to least squares

Partial derivative

- Define the error function as the sum of the squared residuals over all data points

- At the minimum, the partial derivative with respect to each parameter must be zero

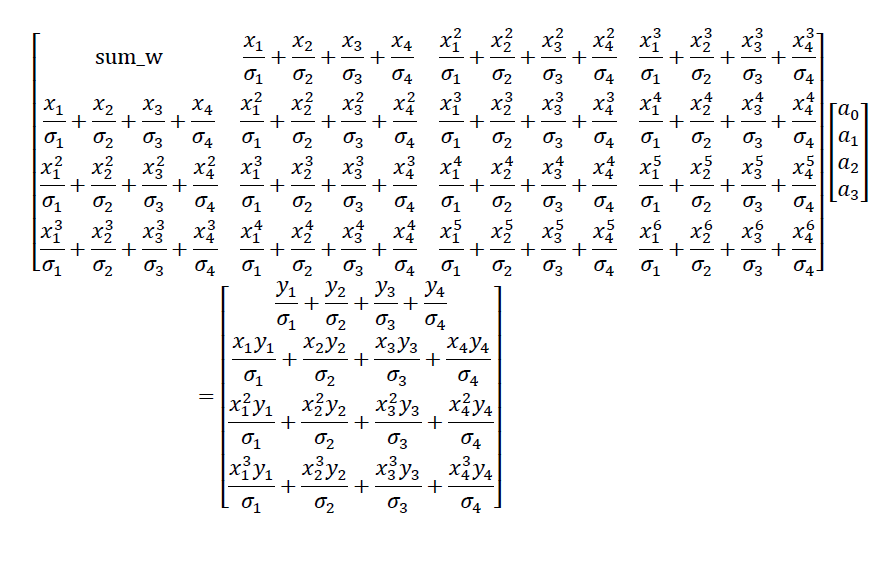

- Collect the resulting equations and assemble them into a single matrix equation
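
As a worked illustration of the steps above, here is the derivation for a straight-line model. The model $f(x) = a x + b$ and the symbols $a$, $b$, $n$ are assumptions chosen for this sketch; the lecture may use a more general model.

$$
E(a, b) = \sum_{i=1}^{n} \bigl(a x_i + b - y_i\bigr)^2
$$

$$
\frac{\partial E}{\partial a} = 2\sum_{i=1}^{n} x_i \bigl(a x_i + b - y_i\bigr) = 0,
\qquad
\frac{\partial E}{\partial b} = 2\sum_{i=1}^{n} \bigl(a x_i + b - y_i\bigr) = 0
$$

$$
\begin{bmatrix} \sum x_i^2 & \sum x_i \\ \sum x_i & n \end{bmatrix}
\begin{bmatrix} a \\ b \end{bmatrix}
=
\begin{bmatrix} \sum x_i y_i \\ \sum y_i \end{bmatrix}
$$

Each row of the final matrix equation is one of the two zero-derivative conditions, divided by 2 and rearranged.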

Matrix

- Construct a matrix $A$ from the given conditions
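
A minimal sketch of the matrix approach, assuming a straight-line model $y \approx a x + b$ so that each row of $A$ is $[x_i, 1]$, and assuming the system is solved through the normal equations $A^T A\,p = A^T y$. The function name `fit_line_normal_equations` and the sample data are hypothetical, and the code is kept dependency-free for illustration.

```python
def fit_line_normal_equations(xs, ys):
    """Fit y ~ a*x + b by solving the normal equations (A^T A) p = A^T y."""
    # Design matrix A: one row [x_i, 1] per data point (assumed line model).
    A = [[x, 1.0] for x in xs]

    # A^T A is a 2x2 matrix and A^T y is a 2-vector.
    ata = [[sum(row[i] * row[j] for row in A) for j in range(2)] for i in range(2)]
    aty = [sum(row[i] * y for row, y in zip(A, ys)) for i in range(2)]

    # Solve the 2x2 system with Cramer's rule.
    det = ata[0][0] * ata[1][1] - ata[0][1] * ata[1][0]
    if det == 0:
        raise ValueError("A^T A is singular; need at least two distinct x values")
    a = (aty[0] * ata[1][1] - ata[0][1] * aty[1]) / det
    b = (ata[0][0] * aty[1] - ata[1][0] * aty[0]) / det
    return a, b


# Sample data lying exactly on y = 2x + 1 (made up for this example).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
a, b = fit_line_normal_equations(xs, ys)
print(a, b)
```

For this line model, $A^T A$ and $A^T y$ contain the same sums that the partial-derivative approach produces, which is why the two approaches give the same answer.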

Likelihood

- Assume the likelihood of each term follows a normal distribution

- Maximizing the likelihood maximizes the probability, which is the same as minimizing the sum of squared residuals in the exponent
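
The reasoning above can be written out as follows. This is a sketch that assumes independent data points and a single common standard deviation $\sigma$; both are assumptions made here for illustration.

$$
L = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi}\,\sigma}
\exp\!\left(-\frac{\bigl(f(x_i) - y_i\bigr)^2}{2\sigma^2}\right)
$$

$$
\ln L = -n \ln\!\bigl(\sqrt{2\pi}\,\sigma\bigr)
- \frac{1}{2\sigma^2} \sum_{i=1}^{n} \bigl(f(x_i) - y_i\bigr)^2
$$

The first term does not depend on the model parameters, so maximizing $L$ is equivalent to minimizing $\sum_i \bigl(f(x_i) - y_i\bigr)^2$, which is the least-squares error.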

Weighted maximum likelihood approach

- Each term $f(x_i) \sim N(y_i, \sigma_i^2)$ (each with its own standard deviation)

- Consider a matrix $W$

- which is…

Note: every $\sigma$ here must be changed to $\sigma^2$:

$\sigma_i \rightarrow \sigma_i^2$
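
A minimal sketch of the weighted approach, assuming the common choice $W = \mathrm{diag}(1/\sigma_i^2)$, a straight-line model $y \approx a x + b$, and the weighted normal equations $A^T W A\,p = A^T W y$. These are assumptions for illustration, not necessarily the exact form used in the lecture. The function name `fit_line_weighted` and the sample data are hypothetical.

```python
def fit_line_weighted(xs, ys, sigmas):
    """Fit y ~ a*x + b by solving (A^T W A) p = A^T W y, W = diag(1/sigma_i^2)."""
    # Assumption: each weight is the inverse variance of its data point.
    ws = [1.0 / (s * s) for s in sigmas]
    # Design matrix A: one row [x_i, 1] per data point (assumed line model).
    A = [[x, 1.0] for x in xs]

    # Since W is diagonal, A^T W A and A^T W y are weighted sums over the rows.
    awa = [[sum(w * row[i] * row[j] for w, row in zip(ws, A)) for j in range(2)]
           for i in range(2)]
    awy = [sum(w * row[i] * y for w, row, y in zip(ws, A, ys)) for i in range(2)]

    # Solve the 2x2 system with Cramer's rule.
    det = awa[0][0] * awa[1][1] - awa[0][1] * awa[1][0]
    if det == 0:
        raise ValueError("A^T W A is singular; need at least two distinct x values")
    a = (awy[0] * awa[1][1] - awa[0][1] * awy[1]) / det
    b = (awa[0][0] * awy[1] - awa[1][0] * awy[0]) / det
    return a, b


# Made-up data: the third point is far off the line y = x.
xs = [0.0, 1.0, 2.0]
ys = [0.0, 1.0, 5.0]

# Equal uncertainties reduce to ordinary least squares.
a_eq, b_eq = fit_line_weighted(xs, ys, [1.0, 1.0, 1.0])
# A large sigma on the third point makes it count very little.
a_w, b_w = fit_line_weighted(xs, ys, [1.0, 1.0, 100.0])
print(a_eq, b_eq)
print(a_w, b_w)
```

With equal uncertainties the outlier pulls the fit away from the first two points, while a large $\sigma$ on the outlier leaves the fit close to the line through the other two. Under this assumption the weights enter as $1/\sigma_i^2$ rather than $1/\sigma_i$, because the exponent of the normal density divides each squared residual by $\sigma_i^2$.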

This post is licensed under CC BY 4.0 by the author.
